First software ate the world; now AI is eating it!

The march of ChatGPT continues unabated, driving a rapid expansion of AI/ML into our everyday lives.

WHY ZETTABOLT?

With expertise across every stage of AI/ML projects, from data cleanup and feature engineering to model creation, fine-tuning and deployment, Zettabolt is best suited for engagements that need high-quality outcomes and predictable delivery. We are well versed in Generative AI technologies built on top of various commercial and open-source Large Language Models (LLMs), and we have delivered customer projects across industry segments.

Our team of trained Data Science and AI/ML specialists can quickly understand customer requirements and deliver projects in significantly less time and at lower cost. We have delivered projects for top Fortune 500 customers, and delivering on our commitments is in our DNA!

WHAT ZETTABOLT OFFERS

DATA & MODELING

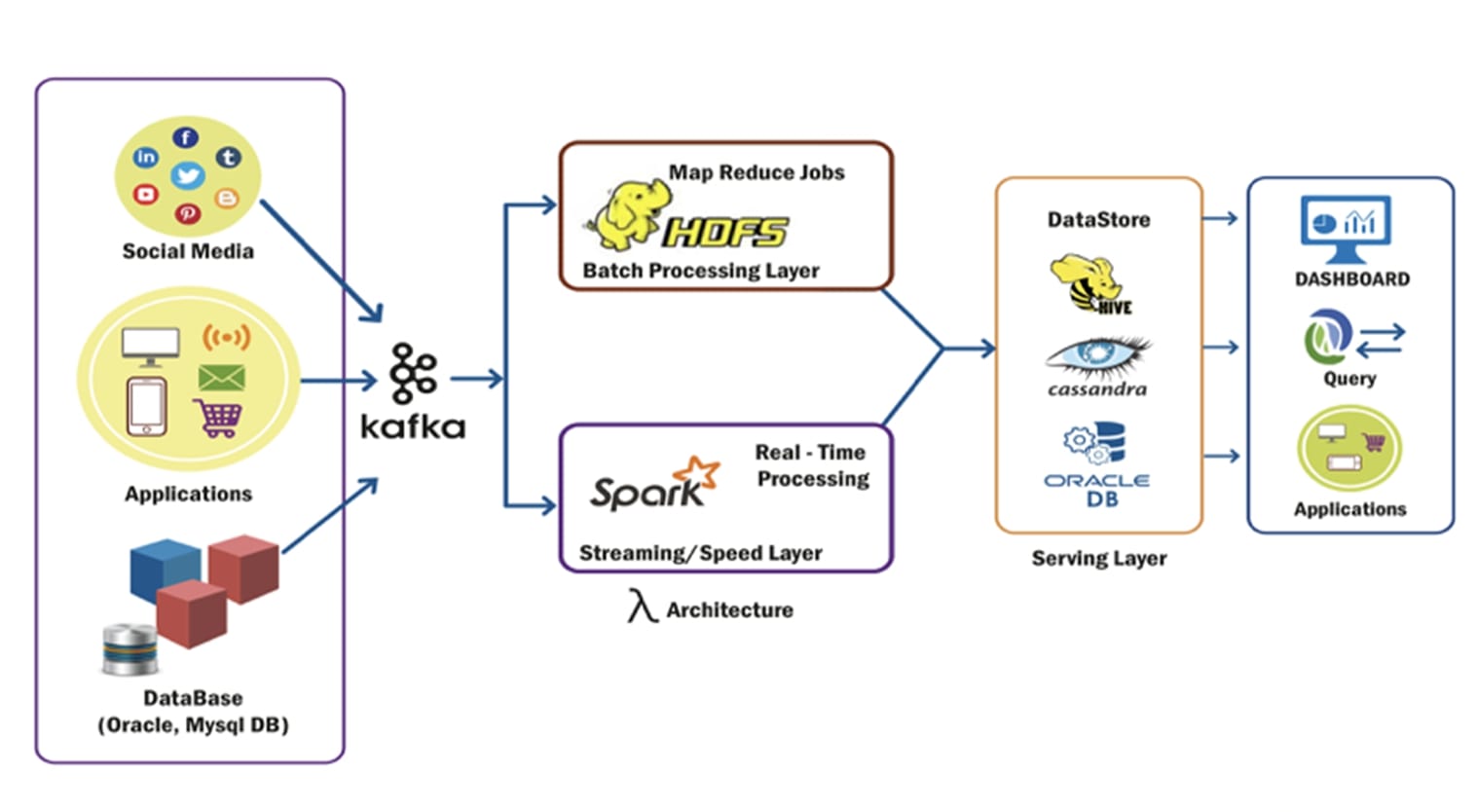

Data ingestion

Preparing data for modeling

Choosing the right algorithms

Ranking and scoring data

Outlier analysis

TECHNIQUES

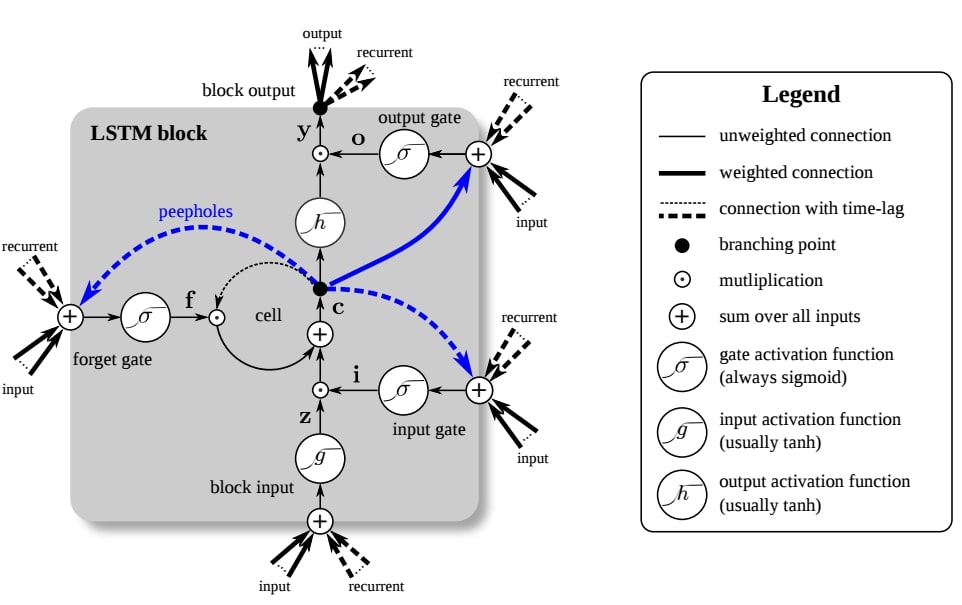

Data clustering, classification and regression

Graph modeling

Knowledge graphs

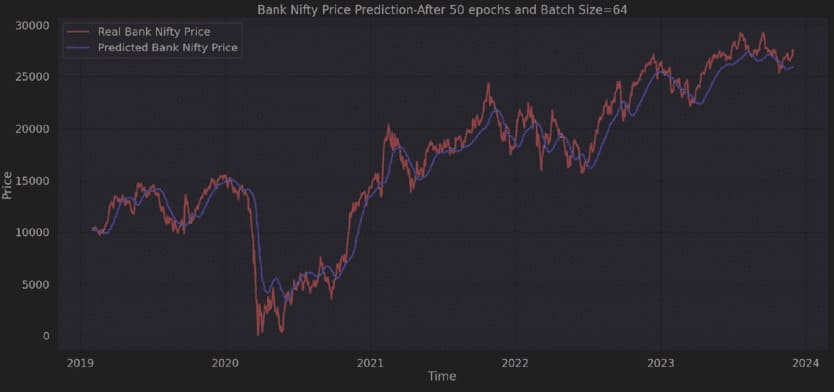

Time series modeling

Natural Language Processing

Generative AI: LLMs

Supervised and unsupervised training

SEGMENTS COVERED

Supply Chain Analytics: demand forecasting, stock analytics, supply chain management

Marketing Analytics: personalization, campaign analytics, social media analytics, predictive analytics

Customer Analytics: 360-degree customer view, retention, sentiment analysis

MLOPS AND AIOPS

Infrastructure and tools required for ML pipelines

Version control, model deployment, CI/CD

Detecting issues in IT operations

Deployment on AWS & Azure

EXPERT ADVICE

Market research

Defining AI strategy

Proofs of concept (PoCs) for ideas

Selection of tools and compute resources

Cost and ROI calculation

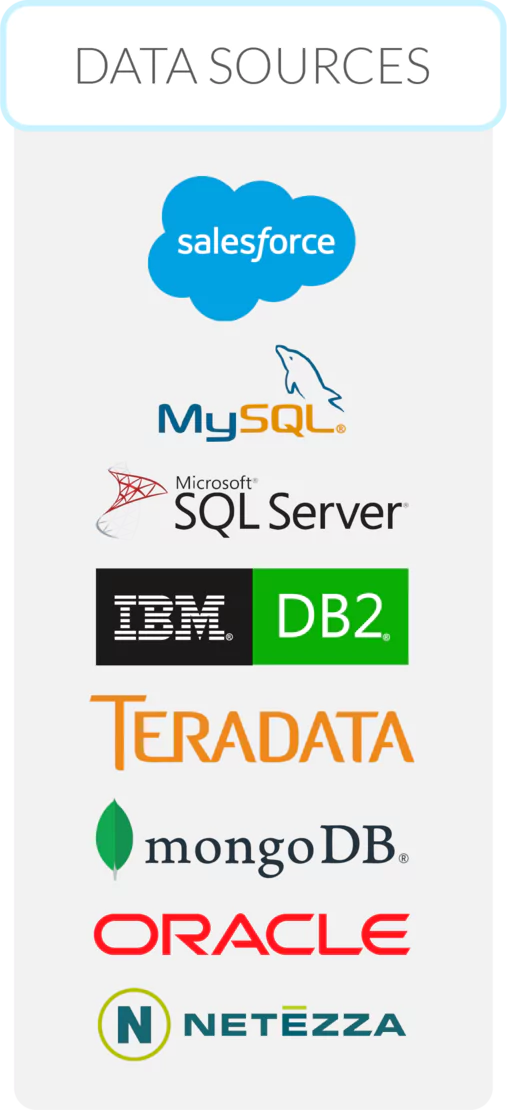

OUR TECH STACK

DATA TO MODELS

With a deep understanding of the techniques needed to build working ML models, we help users predict future outcomes accurately. Our capabilities include:

Data wrangling, cleanup and feeding data into ML pipelines

Writing and tuning ML models using SAS, R, Python, PySpark and Spark ML tools

Applying algorithms for clustering, regression, centrality, closeness, etc., as required

Mapping problems to relational (RDBMS), vector and graph databases

ML & AI OPS

Whether the technologies are open-source or commercial, we help configure ML/AI training and inference pipelines optimally. What we provide:


Connecting models with the data sources

Version control, CI/CD, alerts and monitoring


Migrating pipelines from one setup to another

Cost and ROI trade-offs for on-premises versus cloud

GENERATIVE AI SOLUTIONS

The world of GenAI can be perplexing. With so many possibilities and a plethora of choices, confusion prevails. We help our customers with:

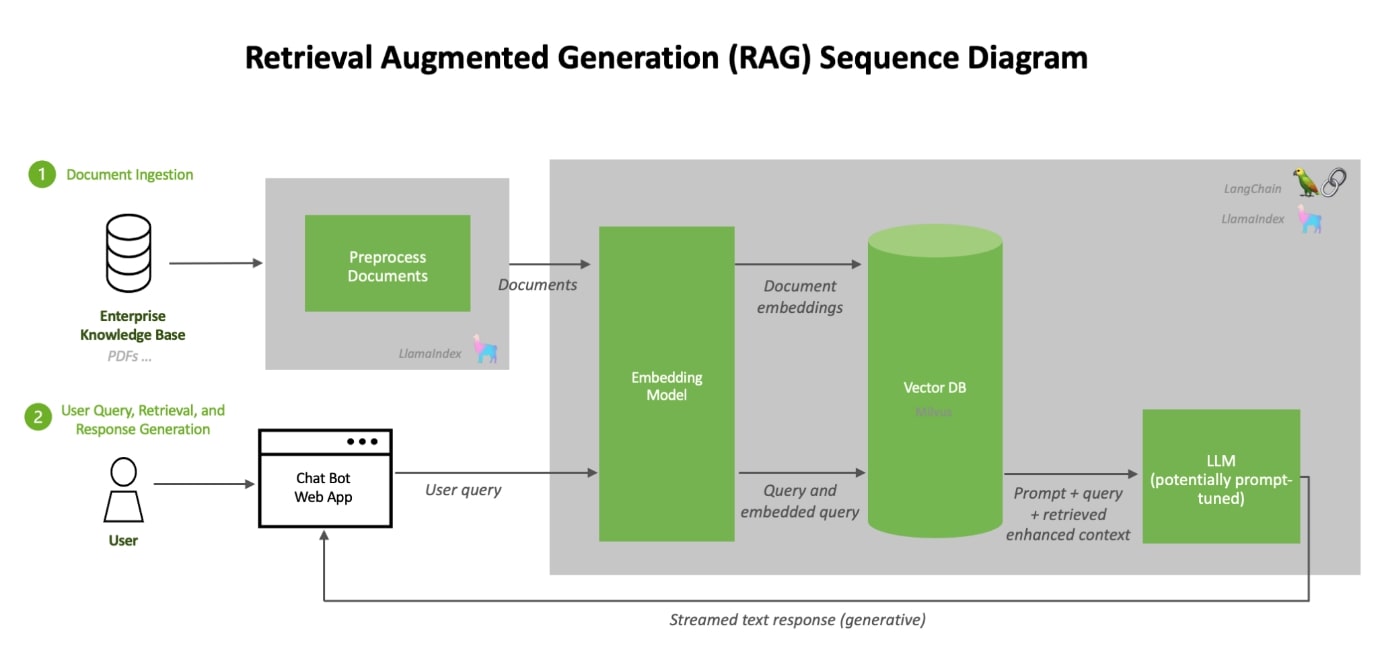

Picking the right LLM, trading off model size against accuracy

Choosing the right vector embeddings for the LLM of choice

Setting up RAG (retrieval-augmented generation) pipelines that keep LLM answers current with incrementally added data, without retraining the model

Custom training and fine-tuning of LLMs

Performance tuning

GPU versus CPU trade-offs

CUSTOMER CASE STUDIES

OUR BLOGS & WHITEPAPERS